Transformation

Become who you are! That's what we've been working on these past few months. Since our collaboration with the La Piscine accelerator last year, we've focused our efforts on designing turnkey hardware and software solutions for collective interactivity and immersion. Our first prototypes confirmed that lightweight hardware lets us build these solutions at low cost, using sustainable and ethical technologies.

Nuit Blanche

For the Nuit Blanche event on February 28, 2026, we developed the technology behind the National Film Board's video remix project, in which visitors worked together to create short films from video archives. Using a mixing console and a giant screen, participants created 60 films collaboratively. Each finished film was sent directly to the sound creation space, where artists from the University of Montreal composed a live soundtrack for it.

Credit: JALQ Photography

For visitors, interacting collectively with an installation means spending time together and forming bonds with other participants, with the artists, and with the content. Their faces, sometimes focused, sometimes smiling, confirmed once again that collective interactivity adds a social dimension to the emotions that artworks evoke.

Our new technology

On the technology side, taking collective interactivity further requires equipping works with technologies that can perceive the group, both visually and acoustically. That's why we are developing a suite of tracking tools built on lightweight hardware and open-source software of our own. We presented this suite at the Alchimia competition during the E-AI conference, and we are delighted to have been selected among the winners!

Presentation at Alchimia

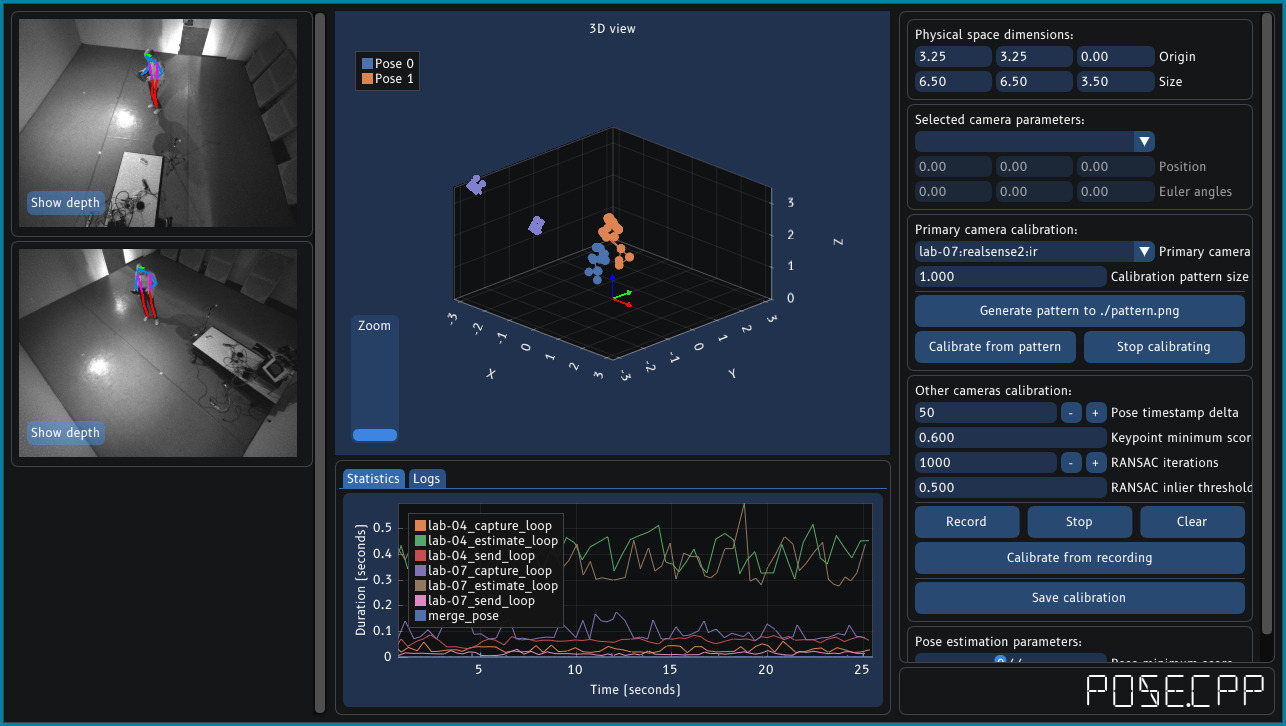

The first tool, Pose.cpp, handles visual tracking. It supports multiple cameras deployed across a venue and calibrates them so it can send the live coordinates of people to a content creation tool. It works regardless of the lighting conditions and can be trained to detect objects, enabling specific interactions. In the image below, it detects a single person, but it can capture the coordinates of an entire group.

Pose.cpp user interface

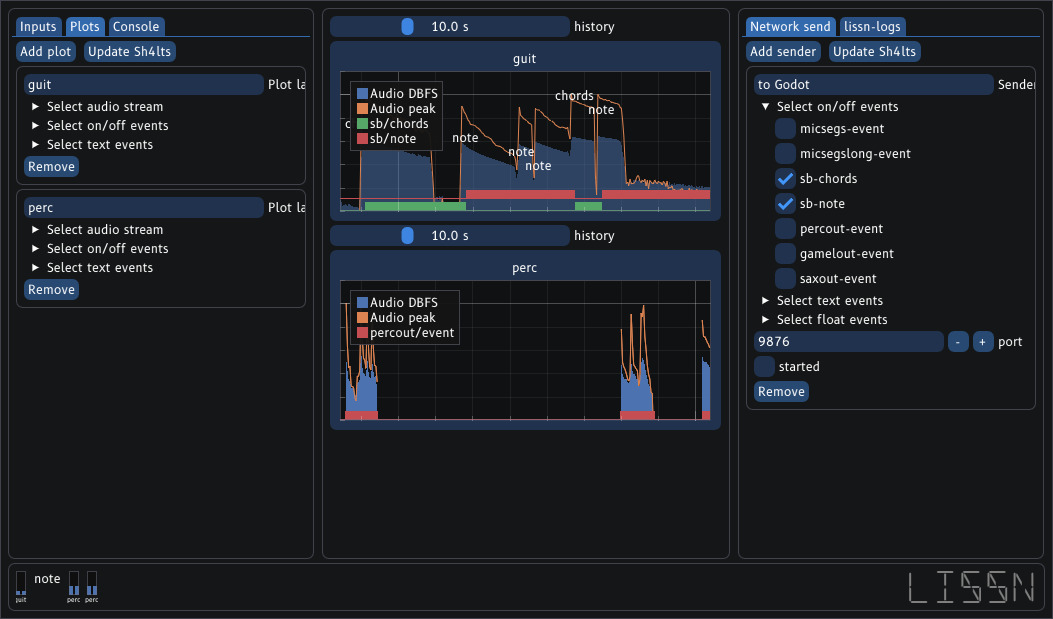

The second tool, Lissn, handles sound tracking. It detects and filters speech, which makes it well suited to applications built around conversational interactions. It also analyzes musical features and, drawing on sound banks, generates live soundtracks that harmonize with the ambient sound. Like Pose.cpp, it can be trained to detect specific types of sounds, and it connects to content creation systems so that sound, too, can drive interactivity.

Lissn user interface

The team is growing

Although functional, these tools are still under development, particularly their hardware and their deployment across several use cases. To move this work forward, we are fortunate to have the help of Victor and Gabriel, two interns from Collège de Bois-de-Boulogne (Montreal), who are working on our secret use cases.

Finally

Reading about our work is all well and good, but to see our demos, our immersive space, and our offices in person, we invite you to visit us. Get in touch at contact@lab148.ca to arrange a visit.